Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

Do you keep finding yourself opening ChatGPT for every simple thing without thinking it through first?

If yes, the reason is usually cognitive offloading. Cognitive offloading means shifting mental effort to an external tool instead of processing it internally. When ChatGPT repeatedly gives fast, structured, useful answers, your brain learns that skipping effort saves time and reduces discomfort.

Over time, this creates a habit loop. Question → AI → instant clarity → relief. The relief reinforces the behavior. Eventually, opening ChatGPT becomes automatic, even for simple problems.

This is not laziness. It is adaptation to an environment where answers are immediate and uncertainty feels unnecessary. Today, with AI integrated into school, work, and daily life, this pattern is increasingly common.

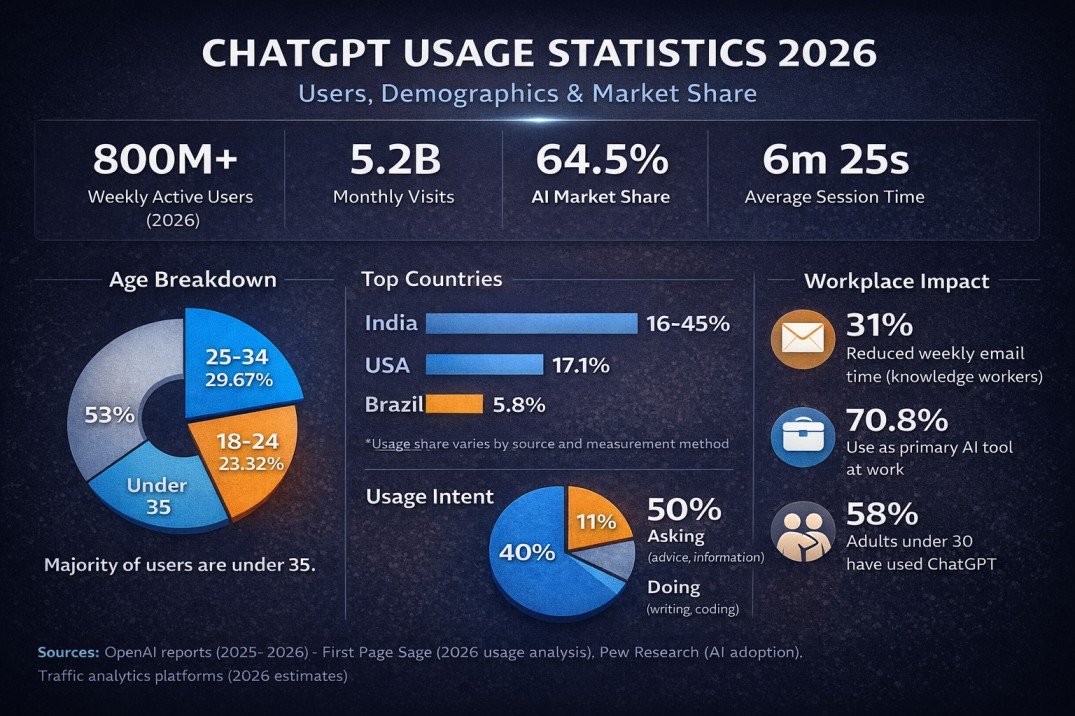

By 2025, using generative AI tools like ChatGPT had become common among young adults in the United States. Research from Pew Research Center shows that AI adoption is especially high among adults under 30, many of whom use it for schoolwork, writing, and everyday questions.

For college students, it often starts with an assignment. You’re stuck, you check ChatGPT for structure or clarification, and it works. The assignment is accepted. The grade is fine. That builds trust.

For young professionals, it begins with speed. Drafting emails faster, summarizing meetings, generating ideas quickly. When your output improves, AI becomes associated with competence.

There is no dramatic shift. Just repeated positive outcomes. Over time, opening ChatGPT feels normal, not exceptional.

You had an assignment due.

You weren’t fully clear on the topic, or you didn’t know how to start. Instead of sitting there staring at a blank page, you opened ChatGPT and asked it to explain the concept or give you a basic structure.

It responded quickly. The explanation made sense. The outline looked organized.

You used it as a starting point. You rewrote parts. You adjusted it. You submitted the work.

Your grade was normal. Nothing went wrong.

That’s usually how it begins.

You didn’t think, “I’m going to rely on AI now.” You just learned that when you feel stuck, this tool gives you a fast way forward. After a few experiences like that, opening ChatGPT starts to feel like a standard step, not a shortcut.

At work, it usually begins with speed.

You had too many emails to reply to. You asked ChatGPT to help you draft one. It sounded clear and professional. You adjusted a few lines and sent it.

Later, you used it to summarize meeting notes. Then to structure a report. Then to outline a presentation. Tasks that normally took longer started moving faster.

Your output improved. You missed fewer details. You hit deadlines more easily.

Maybe your manager said your writing looked sharper. Maybe your turnaround time improved. Maybe you just felt less overwhelmed.

Nothing about it felt risky. It felt efficient.

After enough moments like that, your brain starts linking AI with performance. When you need to move quickly or avoid mistakes, opening ChatGPT feels like a practical decision. Over time, it becomes part of how you work.

At first, you used ChatGPT when you were stuck. It was occasional. Situational.

Over time, something small changes. You stop opening it only when you’re blocked. You start opening it before you’ve even tried to think through the problem yourself.

You don’t sit with the question. You don’t outline rough ideas. You don’t test your own thinking first. You go straight to the tool.

That’s the shift.

AI use becomes dependence not when you use it often, but when it becomes your automatic first step. When opening ChatGPT feels easier than thinking for a few minutes. When the reflex kicks in before effort does.

You might notice it in small ways:

It doesn’t feel dramatic. It feels efficient.

But when a tool moves from “help when needed” to “start here every time,” that’s when it quietly becomes the default.

This shift usually happens quietly.

You open your laptop. A task appears. Instead of thinking through it for a few minutes, you open ChatGPT immediately. You ask for structure, ideas, or a solution before forming your own.

Over time, that order flips. AI comes first. Your thinking comes second.

It doesn’t mean you can’t think. It means your brain has learned to avoid the brief discomfort of not knowing where to start. When that discomfort is removed repeatedly, starting alone begins to feel harder than it actually is.

“Isn’t this just efficiency?”

That’s usually how people frame it. If ChatGPT helps you finish faster, write better, or think more clearly, it doesn’t feel harmful. It feels practical.

“Why doesn’t this feel like a problem?”

Because there are no immediate downsides. Your work gets done. Your grade is fine. Your boss is satisfied. You save time. There’s no visible penalty for relying on AI first.

“If it helps me solve things, what’s the issue?”

The issue isn’t that it helps. The issue is that you may stop engaging with the problem before asking for help. When every small uncertainty is resolved externally, your tolerance for working through confusion on your own can gradually decrease.

It feels harmless because the consequences aren’t immediate. The shift is gradual, which makes it easy to justify and easy to miss.

If you’re in college or early in your career, you’re used to evaluating information quickly. Deadlines are tight. Decisions move fast. You don’t always have time to deeply verify everything.

So when ChatGPT gives you a clean, structured answer, it feels reliable almost immediately.

That trust doesn’t form because you studied the answer. It forms because of how it’s delivered.

When something sounds clear and complete, your brain relaxes. Clarity reduces doubt. Doubt requires effort.

That’s why you may come to trust AI answers more quickly than you realize.

ChatGPT sounds certain.

It doesn’t hesitate.

It doesn’t ramble.

It doesn’t show visible confusion.

It gives complete sentences and logical steps.

Your brain interprets that as expertise.

In real life, confident speakers are often perceived as more knowledgeable. The same bias applies here. Even when a topic is complex, the tone stays steady. That steadiness feels like competence.

It’s not that AI is intentionally “faking” confidence. It’s that fluent language signals authority automatically.

And when you’re tired, busy, or slightly stressed, fluent authority feels safe.

This matters more than people admit.

If the topic is outside your expertise, you don’t have internal reference points. You can’t easily tell if:

So you rely on presentation.

If it sounds structured, it feels correct. If it flows smoothly, it feels true.

That’s why questions like “How do I know if ChatGPT is wrong?” show up so often. The difficulty isn’t that errors are constant. It’s that subtle errors are hard to detect without background knowledge.

The more you use AI in areas where you lack depth, the more tone replaces verification.

And when tone replaces verification consistently, trust becomes automatic.

When you don’t know something, your brain doesn’t stay neutral. It reacts.

An unfinished thought, a blank page, a question you can’t resolve: all of these create tension. Not panic. Just mild internal pressure.

That pressure feels like:

If you’re juggling assignments, work tasks, social decisions, and constant notifications, that small tension feels unnecessary. You already have enough to process.

So when ChatGPT removes that tension instantly, your brain notices.

You don’t just get an answer. You get relief.

Confusion is uncomfortable.

When you’re staring at a problem and don’t know where to start, your brain experiences uncertainty as a small stress signal. It prefers clarity. It prefers closure.

That’s why:

Sitting with not knowing requires patience. It requires effort. And in a fast digital environment, patience feels inefficient.

So instead of tolerating uncertainty, you remove it.

When you ask ChatGPT and receive a clear answer in seconds, the tension drops.

The blank page is no longer blank.

The confusion is replaced with structure.

The uncertainty feels resolved.

That drop in tension is rewarding.

The more often you experience that quick relief, the more your brain starts associating AI with comfort. Not just usefulness. Comfort.

That’s why asking AI can feel satisfying. Not because it’s dramatic or addictive in a clinical sense, but because it consistently replaces uncertainty with clarity.

And once your brain learns that pattern (question, answer, relief), it prefers it.

That’s where the habit deepens.

At first, you use ChatGPT for tasks.

Then you start using it for questions.

Not academic questions. Not work questions. Personal ones.

You ask things you wouldn’t necessarily Google:

It doesn’t judge. It doesn’t get impatient. It responds immediately.

That’s when the interaction shifts from practical to personal.

Asking ChatGPT about dating, friendships, or everyday decisions isn’t unusual anymore. Many people do it because it’s fast, private, and structured. The tool doesn’t interrupt you. It doesn’t misinterpret tone. It gives you scenarios and explanations.

Over time, that predictability feels reliable.

ChatGPT often responds with structured scenarios:

When you read those, one of them usually feels accurate.

That moment of recognition builds trust.

It feels like understanding, even though it’s pattern-based generation. The language is conversational. The tone is neutral. The reasoning sounds balanced.

Because it mirrors how a calm, thoughtful person might respond, it can feel human.

The system doesn’t actually “understand” you in an emotional sense. But when it reflects possibilities clearly and without judgment, it feels relatable.

And when something feels relatable, trust increases.

That’s when AI shifts from tool to sounding board.

Regularly turning to ChatGPT for simple problems can change how you experience mental effort. The change is not about losing intelligence. It is about how often you practice working through uncertainty on your own.

When confusion is resolved instantly every time, your tolerance for unresolved thinking can decrease. That’s why some people start asking whether AI is affecting their sharpness or attention span. The impact is gradual, not dramatic.

Struggling briefly with a problem activates deeper processing in the brain. When you try to retrieve information, organize ideas, or test explanations, you strengthen memory pathways. Effort increases retention.

Confusion feels uncomfortable because your brain prefers closure. Sitting with not knowing requires patience and working memory. That early friction is often where understanding forms.

Thinking through a problem before seeing the answer improves recall, problem-solving flexibility, and long-term learning. When effort is skipped entirely, understanding can become more surface-level.

Repeatedly bypassing effort can reduce mental stamina. If you rarely attempt problems independently, starting without assistance may begin to feel harder than it actually is.

Over time, this pattern can show up as:

Using ChatGPT does not automatically harm learning. The risk appears when independent effort is consistently replaced rather than supported. Mental endurance grows through use. If it is used less, it can feel weaker.

Using ChatGPT is not automatically unhealthy. The difference lies in how and when you use it.

If you’re wondering whether you’re dependent on ChatGPT, the question to ask is not how often you use it, but whether you can comfortably start without it.

AI use becomes unhealthy when it replaces independent effort entirely, not when it supports it. The tool itself is not harmful to your brain. The pattern of skipping engagement every time is what can reduce mental stamina.

Dependency is about reflex, not frequency.

A healthy way to use ChatGPT includes effort before assistance.

You try first.

You outline rough ideas.

You attempt to solve the problem briefly.

Then you use AI to refine, expand, or clarify.

This keeps your thinking active.

Trying before asking AI strengthens recall and reasoning. Using AI after that can sharpen understanding rather than replace it.

Healthy use feels supportive. You can work without it if needed. AI improves your output, but it does not feel required for you to begin.

Reflexive dependence looks different.

You open ChatGPT immediately when a task appears.

You feel uneasy starting alone.

You hesitate when AI is unavailable.

You feel less confident thinking without external structure.

This doesn’t automatically mean addiction. But if you panic when the tool is down or feel stuck without it, that suggests the habit has shifted from support to reliance.

The key signal is emotional response. If the absence of AI creates stress or avoidance, the pattern may be stronger than you realized.

Support strengthens your thinking. Dependence replaces it.

Not everyone who uses ChatGPT frequently has a problem.

You should not worry simply because you use AI daily. The concern begins when reliance changes your emotional state or decision-making patterns.

AI dependence becomes a real issue when:

Sometimes what feels like “AI dependence” is just habit. Other times, it may be masking stress, burnout, or avoidance.

The key difference is this: does the tool support your thinking, or is it replacing areas of life that require personal reflection and human nuance?

Avoiding thinking is not about intelligence. It’s often about discomfort.

If you notice that you:

then the pattern may be more about avoiding mental strain than improving efficiency.

In some cases, heavy AI use can also mask burnout. When you’re mentally tired, outsourcing thinking feels relieving. But relief is not the same as restoration.

Anxiety without AI can signal that the tool has become your regulator, not just your assistant.

ChatGPT works well for structure, summaries, brainstorming, and productivity. It requires caution in areas that depend on personal context.

Be careful when using AI for:

AI generates responses from patterns in data. It does not know your history, tone, or the internal thoughts of other people. Confident explanations may feel accurate because they match common scenarios, not because they reflect your exact situation.

Use AI to organize thinking. Do not let it define personal reality.

Relying on ChatGPT before attempting simple problems alone is a behavioral shift, not a permanent change in your ability.

Using AI does not automatically reduce intelligence or critical thinking. The effect depends on how often independent effort is replaced. If you still engage your own reasoning regularly, your skills remain active.

There is no need to stop using AI entirely. The more relevant question is whether you can begin tasks, think through uncertainty, and form initial ideas without immediate assistance.

If that step has been skipped, it can be reintroduced. Independent thinking strengthens with repetition. The pattern is adjustable.

Balance comes from deciding when to think first and when to use a tool.

Relying on ChatGPT can reduce problem-solving strength if it consistently replaces independent effort. Skills weaken when they are not practiced. If you attempt problems first and use AI for refinement, your reasoning remains active and less likely to decline.

AI use becomes excessive when you feel unable to start tasks without it or experience stress when it’s unavailable. The issue is not frequency alone. The concern begins when AI becomes the automatic first step in nearly every situation.

Using ChatGPT for studying can affect memory retention if you skip retrieval and effort. Memory strengthens when you attempt recall before seeing the answer. Reviewing AI-generated explanations without trying first may lead to shallow understanding rather than durable learning.

Independent thinking can be strengthened again through repetition. Attempting tasks alone, sitting briefly with uncertainty, and forming rough ideas before seeking assistance gradually rebuild mental stamina. Cognitive skills respond to consistent practice.

Asking AI for perspective is not automatically unhealthy, but it requires caution. AI generates responses from general patterns, not from direct knowledge of your emotional history or another person’s intentions. Personal and relational decisions require context that AI cannot fully access.